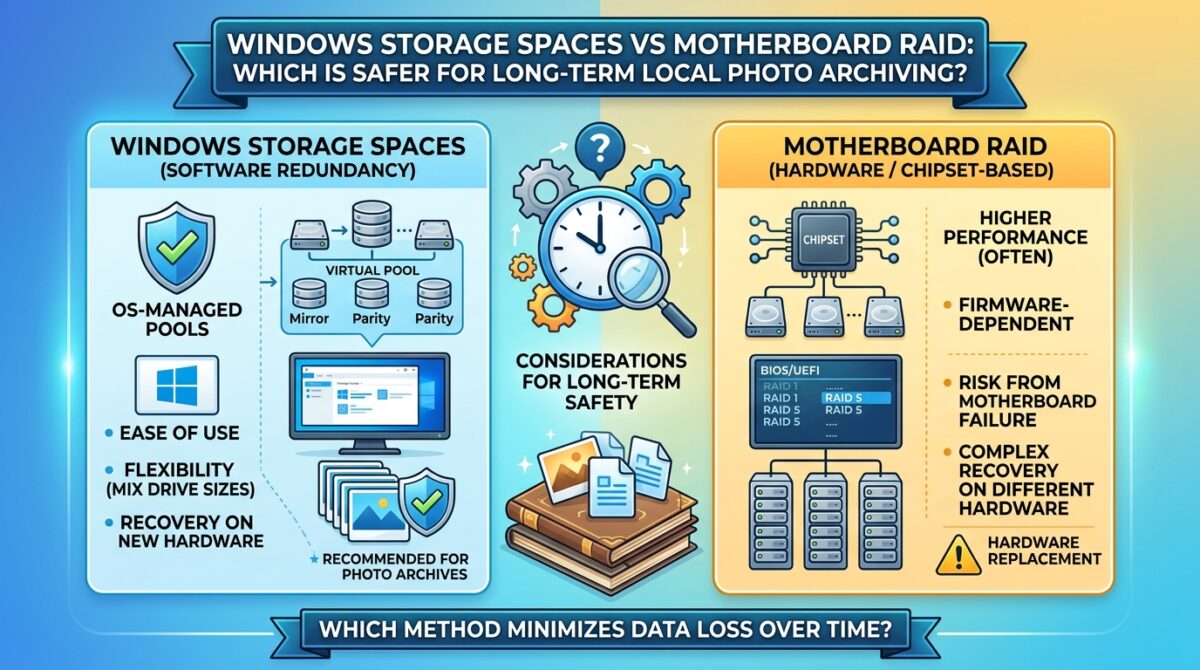

Windows Storage Spaces vs Motherboard RAID: Which Is Safer for Long-Term Local Photo Archiving?

Preserving a digital photo collection over many years depends on the storage method, its redundancy, and its reliability. Photographers, whether professionals or hobbyists, often accumulate large numbers of high-resolution images, which makes data loss a serious risk. Windows Storage Spaces and motherboard-based RAID are two common ways to protect local data. Both provide redundancy and fault tolerance, but they differ in implementation, flexibility, and recovery options. The right choice depends on the size of the archive, the hardware, your risk tolerance, and the balance you want between performance and safety. Building a safe, durable home for a photo archive starts with understanding what each system can and cannot do.

How Windows Storage Spaces Works

Storage Spaces is a software-defined storage system built into recent versions of Windows. It lets users combine multiple drives into a single storage pool and choose a resiliency level, such as two-way or three-way mirroring, to protect against drive failure. Unlike traditional RAID, Storage Spaces operates at the operating system level, which makes it easier to mix drives of different sizes and brands. It also supports parity layouts, which provide fault tolerance while using capacity more efficiently than mirroring. Because it is software-based, Storage Spaces is highly adjustable: users can extend or reorganize a pool as storage needs grow, which is especially helpful for photo archives that expand over time.

How Motherboard-Based RAID Works

Motherboard RAID, usually configured through the BIOS or UEFI firmware, lets users combine several physical drives into an array such as RAID 0, RAID 1, or RAID 5. In some setups it can offer performance advantages, although consumer motherboard RAID typically still relies on the CPU and a vendor driver rather than a dedicated controller, so any reduction in CPU overhead is usually modest. Mirror-based and parity-based arrays protect against the failure of one or more drives, depending on the RAID level. Motherboard RAID is often described as more “transparent” to programs because the array is assembled below the operating system and presented to it as a single disk. For photo archiving, RAID can provide dependable protection and consistent performance, though it is generally less flexible than software-defined systems.

Considerations Regarding Redundancy and Fault Tolerance

Storage Spaces and motherboard RAID both provide redundancy against drive failure, but they achieve it differently. Storage Spaces supports three-way mirroring, which allows two drives to fail without data loss. Common motherboard RAID levels such as two-drive RAID 1 and RAID 5 normally survive only a single drive failure. Parity layouts in Storage Spaces are flexible, but depending on pool size and drive speed they can have longer rebuild times. Motherboard RAID can often rebuild an array more quickly, but it is more exposed to data loss if several drives fail at once, especially at RAID levels with little or no redundancy. When the photos are irreplaceable, it is essential to decide how much fault tolerance you actually need.
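To make the trade-off concrete, here is a minimal sketch that tabulates idealised usable capacity and the number of drive failures each layout survives in the worst case. It assumes identical drives and ignores metadata overhead, and the `summarize` function and layout names are illustrative, not part of any Windows or RAID tool. The minimum drive counts are the commonly documented ones and may differ on a given controller.

```python
def summarize(layout: str, drives: int, size_tb: float) -> tuple[float, int]:
    """Return (usable TB, drive failures survived in the worst case).

    Textbook figures for identical drives; real pools and arrays
    lose a little extra capacity to metadata.
    """
    raw = drives * size_tb
    # layout -> (minimum drives, usable capacity, guaranteed failures survived)
    rules = {
        "raid0": (2, raw, 0),                     # striping, no redundancy
        "raid1": (2, size_tb, drives - 1),        # every drive holds a full copy
        "raid5": (3, raw - size_tb, 1),           # one drive's worth of parity
        "raid10": (4, raw / 2, 1),                # a second failure may hit the same pair
        "two_way_mirror": (2, raw / 2, 1),        # Storage Spaces, two copies
        "three_way_mirror": (5, raw / 3, 2),      # Storage Spaces, three copies
    }
    if layout not in rules:
        raise ValueError(f"unknown layout: {layout}")
    min_drives, usable, failures = rules[layout]
    if drives < min_drives:
        raise ValueError(f"{layout} needs at least {min_drives} drives")
    if layout == "raid10" and drives % 2:
        raise ValueError("raid10 needs an even number of drives")
    return usable, failures


if __name__ == "__main__":
    for name, count in [("raid1", 2), ("raid5", 4), ("two_way_mirror", 4),
                        ("three_way_mirror", 5)]:
        usable, failures = summarize(name, count, 4.0)
        print(f"{name:17s} {count} x 4 TB -> {usable:5.2f} TB usable, "
              f"survives {failures} failure(s)")
```

The point of the table is that extra fault tolerance is paid for in capacity: a three-way mirror keeps only a third of the raw space, while RAID 5 keeps most of it but tolerates a single failure.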

Flexibility and Scalability for Growing Archives

Flexibility is where Storage Spaces excels: users can add new drives to a pool without rebuilding the storage array. Its support for drives of different capacities makes it much simpler to scale storage as the photo collection grows. Motherboard RAID, by contrast, usually calls for drives of equal or similar size, because an array can use only as much of each drive as the smallest member provides. Increasing capacity may also require rebuilding the array, which can be disruptive and carries real risk while the rebuild is in progress. The ability to expand storage safely and efficiently is a significant advantage of Storage Spaces over motherboard RAID, particularly for users whose archives keep growing.
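The effect of mixed drive sizes can be estimated with a little arithmetic. The sketch below is a simplified model, not a description of how any particular product allocates space: it assumes a classic RAID array truncates every member to the size of the smallest drive, and it treats a pooled two-way mirror as limited only by the need to place the two copies of each block on different drives. The function names are illustrative.

```python
def raid1_usable(sizes_tb: list[float]) -> float:
    """Classic RAID 1: every member is truncated to the smallest drive."""
    return min(sizes_tb)


def raid5_usable(sizes_tb: list[float]) -> float:
    """Classic RAID 5: (n - 1) members' worth of the smallest drive."""
    if len(sizes_tb) < 3:
        raise ValueError("RAID 5 needs at least three drives")
    return (len(sizes_tb) - 1) * min(sizes_tb)


def pooled_two_way_mirror_upper_bound(sizes_tb: list[float]) -> float:
    """Idealised ceiling for a pooled two-way mirror.

    Each block needs two copies on different drives, so capacity is capped
    both by half the raw total and by what the other drives can pair with
    the largest drive. Real pools reserve some space and may land lower.
    """
    if len(sizes_tb) < 2:
        raise ValueError("a mirror needs at least two drives")
    total = sum(sizes_tb)
    return min(total / 2, total - max(sizes_tb))


if __name__ == "__main__":
    drives = [2.0, 4.0, 8.0]
    print("RAID 5 usable TB:", raid5_usable(drives))
    print("Pooled mirror ceiling TB:", pooled_two_way_mirror_upper_bound(drives))
```

Under these assumptions a 2 TB, 4 TB, and 8 TB set yields 4 TB as RAID 5 but up to 6 TB as a pooled mirror. A pool is not magic, though: with only a 4 TB and an 8 TB drive, both approaches top out at 4 TB, because the second copy has nowhere else to go.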

Recovery Options and Data Integrity

For long-term photo preservation, data recovery and integrity are key considerations. Storage Spaces, when paired with the ReFS file system, supports integrity streams and automatic repair, which can detect corruption and attempt to correct it from a redundant copy. This adds a layer of protection against silent data degradation ("bit rot"), which can creep into large archives over time. Motherboard RAID relies on the array's controller or firmware for error detection, and this does not always catch subtle corruption. Although RAID generally offers dependable drive-level redundancy, it may require specialist recovery tools if data becomes inaccessible, particularly after multiple drive failures or controller problems.
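Whichever system you choose, you can also check for silent corruption yourself by recording a checksum for every file and comparing it later. The following is a minimal sketch of that idea using only the Python standard library. It is independent of Storage Spaces and RAID, and the function names are illustrative. Keep the saved manifest outside the archive folder so it is not hashed along with the photos.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large RAW files do not fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    return {
        path.relative_to(root).as_posix(): sha256_of(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def find_changes(root: Path, manifest: dict[str, str]) -> dict[str, list[str]]:
    """Compare the archive on disk against a previously saved manifest."""
    current = build_manifest(root)
    shared = manifest.keys() & current.keys()
    return {
        "missing": sorted(manifest.keys() - current.keys()),
        "changed": sorted(name for name in shared if manifest[name] != current[name]),
        "new": sorted(current.keys() - manifest.keys()),
    }
```

A file listed under "changed" that you never edited is a candidate for restoring from backup. A check like this only detects damage; repairing it still depends on having a good copy elsewhere.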

Performance Considerations

Performance depends on the configuration and the type of workload. Motherboard RAID arrays, especially RAID 0 or RAID 10, often deliver faster sequential reads and writes, which helps when importing or editing large batches of photos. Because Storage Spaces is software-defined, it relies on the CPU and the operating system for processing. This can reduce performance somewhat under heavy workloads, although modern systems usually handle it without a noticeable slowdown. For photo archiving, where access speed matters but fault tolerance matters more, either system can be adequate. Users should still weigh the demands of their own workflow when choosing between the two.

Practical Recommendations for Photo Archiving

Whether Storage Spaces or motherboard RAID is the better choice for long-term local photo storage depends on your priorities. Storage Spaces suits users who want flexibility, the option to mix drive sizes, and additional data integrity checks, which makes it a strong choice for large, growing archives where silent data corruption is a concern. Motherboard RAID suits users with identical drives who value slightly better performance and ease of setup. Whichever system you use, keep regular backups on separate devices or off-site, because no RAID array or storage pool protects against disasters, theft, or accidental deletion.

Striking a Balance Between Performance, Flexibility, and Safety

In the end, Storage Spaces and motherboard RAID both help safeguard a local photo archive. The main difference lies in how each balances safety, expandability, and performance. Its greater flexibility and built-in data integrity safeguards make Storage Spaces well suited to managing growing collections of irreplaceable images. Motherboard RAID delivers dependable redundancy and, in some setups, faster access, but it is less flexible and offers no OS-level protection against silent corruption. Users should weigh their storage requirements, the expected growth of their archive, and their tolerance for risk when choosing the solution best suited to preserving their digital memories for the long term.